The rapid rise of advanced artificial intelligence has sparked a growing debate in Washington and across the technology sector: should governments ultimately take control of artificial general intelligence (AGI)? The discussion resurfaced after OpenAI CEO Sam Altman raised the possibility that if AI systems become powerful enough to influence global security or economic stability, governments may feel compelled to nationalize or directly control them. As AI capabilities accelerate toward systems that could rival human reasoning across many domains, policymakers are beginning to consider whether AGI should be treated more like critical national infrastructure than a commercial product.

“It has seemed to me for a long time it might be better if building AGI were a government project,” Sam Altman publicly mused this past Saturday evening.

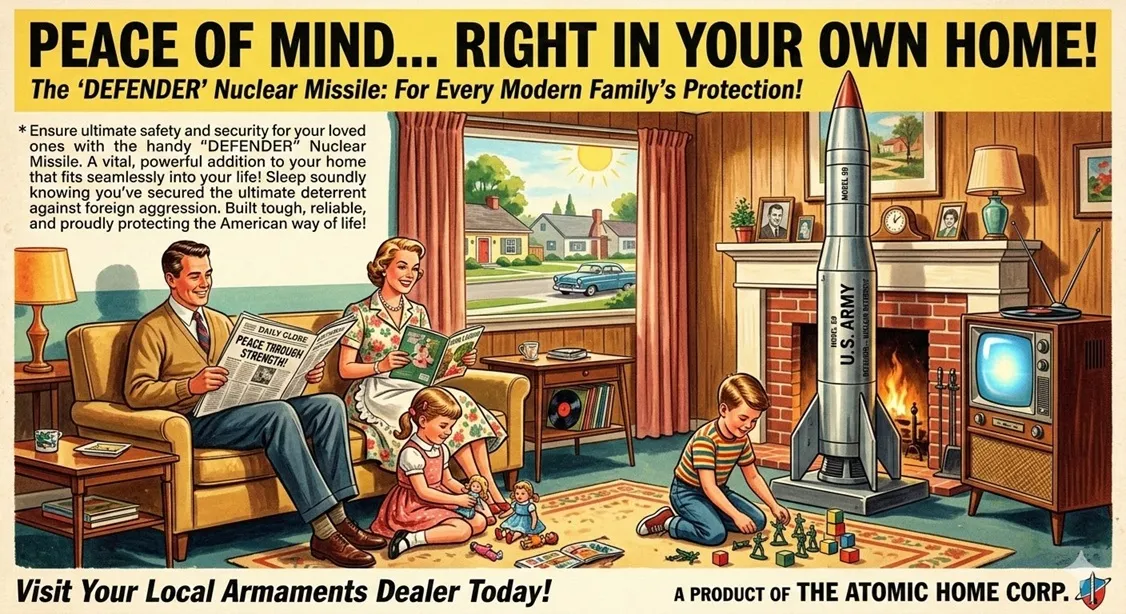

The issue has become particularly relevant as frontier AI companies increasingly collaborate with government agencies, including defense and national security institutions. Partnerships between AI labs and governments are already emerging to support cybersecurity, intelligence analysis, and military planning. These relationships have prompted a deeper conversation about where the boundary lies between private innovation and state control. Some experts argue that AGI could become so strategically important that leaving it entirely in private hands may pose risks comparable to allowing private actors to control nuclear technology.

Supporters of stronger government oversight argue that AGI could reshape economies, geopolitical power, and even the structure of societies. If such systems became capable of autonomous research, advanced planning, or control of critical infrastructure, governments might see nationalization or strict regulation as necessary to prevent misuse or a concentration of power among a small group of technology companies. In this view, AGI would represent a strategic resource similar to nuclear energy, advanced semiconductors, or national defense systems.

“If Silicon Valley believes we’re going to take everyone’s white collar jobs…AND screw the military…If you don’t think that’s going to lead to the nationalization of our technology—you’re retarded” – Alex Karp, CEO of Palantir

Critics, however, warn that government control over advanced AI could slow innovation and concentrate too much technological authority in the hands of the state. Some technologists argue that open collaboration between private companies, academia, and public institutions has historically produced faster and safer technological progress. Others fear that nationalizing AGI could lead to an international race among governments to secure AI dominance, potentially accelerating military applications rather than limiting them.

The debate ultimately reflects a deeper question about the future governance of artificial intelligence. As AI systems move closer to general-purpose intelligence, the balance between private-sector innovation and government oversight will become increasingly important. Whether AGI remains in the hands of technology companies or eventually becomes a form of state-controlled infrastructure may be one of the defining policy decisions of the AI era.

Reference: Original article from The New Stack: https://thenewstack.io/openai-defense-department-debate/