When DeepSeek exploded onto the global AI scene in early 2025 with its open-source R1 model, it caught much of the industry off guard. A relatively unknown Chinese startup had managed to release a model that rivaled some of the most advanced proprietary systems in the United States. It challenged the assumption that only trillion-dollar American companies with enormous compute budgets could compete at the frontier.

Many observers expected the momentum to fade, but DeepSeek has now returned with DeepSeek-V4, a significantly more advanced system that some in the industry are calling the “second DeepSeek moment.” The company is no longer simply proving it can compete with global leaders. It is now attempting to reshape the economics of the entire AI market.

Why DeepSeek-V4 Matters

DeepSeek-V4 is reportedly a 1.6-trillion-parameter Mixture-of-Experts model designed to deliver strong reasoning, coding, long-context understanding, and agentic task performance while remaining highly efficient. Rather than activating the entire model for every token, a Mixture-of-Experts design routes each token through only a small subset of its parameters, reducing inference costs dramatically while maintaining strong capability.
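To make the idea of sparse activation concrete, here is a minimal sketch of generic top-k expert routing in Python. It is not DeepSeek-V4's actual router, which the release reporting summarized here does not describe. The expert count, the value of `TOP_K`, the router scores, and the toy experts are all hypothetical and chosen only for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts and normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, router_logits, k):
    """Run only the chosen experts and blend their outputs."""
    routes = route_top_k(router_logits, k)
    output = sum(weight * experts[i](x) for i, weight in routes)
    active = [i for i, _ in routes]
    return output, active

# Hypothetical toy layer: 8 experts, 2 active per token (not DeepSeek's real config).
NUM_EXPERTS = 8
TOP_K = 2
# Each toy "expert" just scales its input by a different factor.
experts = [lambda x, scale=i + 1: scale * x for i in range(NUM_EXPERTS)]
# Hypothetical router scores for one token; a real router computes these from the token.
router_logits = [0.1, 2.0, -1.0, 1.5, 0.3, -0.5, 0.9, 0.0]

output, active = moe_forward(3.0, experts, router_logits, TOP_K)
active_fraction = TOP_K / NUM_EXPERTS
print(f"active experts: {active}, share of experts used: {active_fraction:.0%}")
```

In this toy setup only experts 1 and 3 run, so a quarter of the experts do the work for that token. The same principle, at a much larger scale, is what lets a very large model keep per-token compute well below its total parameter count.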

That architectural decision is important because AI is increasingly becoming a scale business. Once companies move beyond experimentation and begin deploying AI across customer service, internal operations, coding, research, and workflow automation, token costs become highly significant. A model that is slightly weaker in some areas but substantially cheaper can quickly become the preferred enterprise choice.

This is why DeepSeek-V4 matters strategically. It represents a serious attempt to make advanced AI commercially practical at scale rather than simply technically impressive.

The End of Premium AI Pricing?

For much of the last two years, the AI market has operated like luxury retail. If organisations wanted the most advanced capabilities, they paid premium prices to providers such as OpenAI or Anthropic. Developers accepted those costs because lower-priced alternatives were often materially weaker.

DeepSeek is now challenging that assumption. Reports suggest DeepSeek-V4-Pro pricing is dramatically lower than premium Western frontier systems while still offering near-frontier capability in many scenarios. That changes the cost-benefit equation for businesses running large inference workloads.

If a company can automate coding assistance, support agents, research workflows, or internal operations at one-sixth the cost, then projects previously considered too expensive suddenly become viable. This is how markets are often disrupted: not only through better technology, but through superior economics.
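A rough back-of-the-envelope calculation shows how a one-sixth price can move a project from unaffordable to viable. The sketch below is illustrative only: the premium price, the monthly token volume, and the budget are hypothetical placeholders, not published figures from any provider. Only the one-sixth ratio comes from the discussion above.

```python
def monthly_cost(tokens_per_month, price_per_million_tokens):
    """Cost in USD for a month of inference at a flat per-token price."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens

# All figures below are hypothetical assumptions for illustration.
PREMIUM_PRICE = 30.00              # USD per million tokens (assumed)
CHEAPER_PRICE = PREMIUM_PRICE / 6  # the one-sixth scenario discussed above
TOKENS_PER_MONTH = 5_000_000_000   # assumed enterprise workload
BUDGET = 50_000.00                 # assumed monthly budget in USD

premium_cost = monthly_cost(TOKENS_PER_MONTH, PREMIUM_PRICE)
cheaper_cost = monthly_cost(TOKENS_PER_MONTH, CHEAPER_PRICE)

for label, cost in [("premium", premium_cost), ("one-sixth", cheaper_cost)]:
    verdict = "within budget" if cost <= BUDGET else "over budget"
    print(f"{label}: ${cost:,.0f} per month ({verdict})")
```

Under these assumed numbers the same workload costs $150,000 a month at the premium price and $25,000 at one-sixth of it, so only the cheaper option fits the budget. Real outcomes depend on actual prices, token volumes, and any quality gap between the models.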

DeepSeek does not need to dominate every benchmark to succeed. It only needs to be strong enough, cheap enough, and scalable enough to become the rational business choice.

Benchmark Wars vs Real Business Value

The AI industry remains heavily focused on benchmarks, but enterprises usually care more about outcomes. They are less concerned with who wins isolated leaderboard tests and more interested in whether a model can write reliable code, summarize complex datasets, power internal copilots, handle large documents, and run efficiently at scale.

If the answer to those questions is yes, then DeepSeek becomes a serious commercial contender regardless of whether another model scores slightly higher on academic tests.

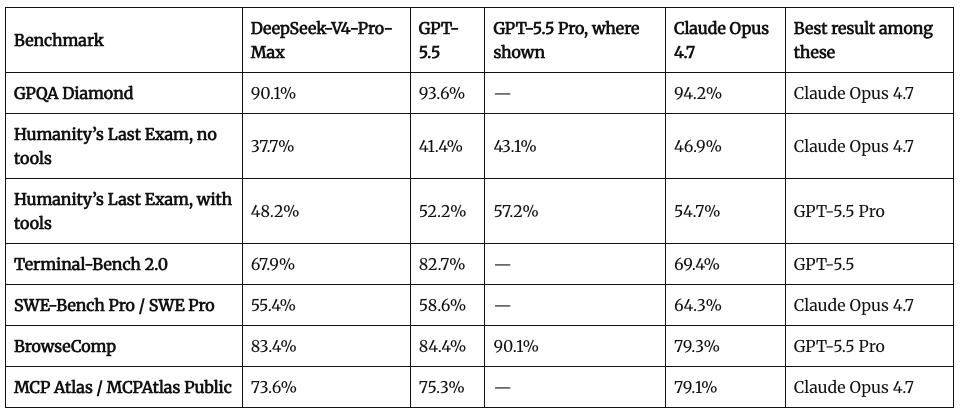

DeepSeek-V4-Pro-Max’s best showing is on BrowseComp, a benchmark of agentic web browsing that tests whether a model can track down hard-to-find information. There it scores 83.4%, narrowly behind GPT-5.5 at 84.4% and ahead of Claude Opus 4.7 at 79.3%. On Terminal-Bench 2.0, DeepSeek-V4-Pro-Max scores 67.9%, close to Claude Opus 4.7’s 69.4% but far behind GPT-5.5’s 82.7%.

This preference for overall value over leaderboard position mirrors what happened in cloud computing, where customers did not always choose the absolute best infrastructure. Instead, they selected platforms that balanced price, performance, reliability, and flexibility. AI now appears to be entering that same stage of market maturity.

Open Source as a Strategic Weapon

Perhaps even more important than price is DeepSeek’s licensing strategy. DeepSeek-V4 has reportedly been released under the MIT License, one of the most permissive open-source licenses available. That allows developers and enterprises to use, modify, and deploy the model commercially with minimal restrictions.

This stands in contrast to many so-called open-weight competitors that still maintain significant licensing limitations. That distinction matters because true openness gives organisations more control over their long-term future.

Companies can self-host models, fine-tune them, audit them, customize them for internal use, and avoid becoming dependent on a single API vendor. In a world where AI may power core operations, that level of sovereignty becomes increasingly valuable.

The Sovereign AI Era Is Emerging

There is also a geopolitical story unfolding beneath the surface. DeepSeek’s reported optimisation across multiple hardware environments, including systems beyond NVIDIA’s GPU ecosystem, signals something larger: the rise of sovereign AI stacks.

Countries, corporations, and public institutions are beginning to recognize that relying entirely on foreign chips, foreign clouds, and foreign AI APIs may carry strategic risk. The next generation of buyers may not only ask which model is smartest, but also where it runs, who controls it, whether sanctions could disrupt access, and whether they can own the infrastructure themselves. DeepSeek appears to be positioning itself for that future.

Pressure on OpenAI, Anthropic, and Google

None of this means U.S. AI leaders are finished. Closed frontier systems still lead in many categories and often provide stronger tooling, polished ecosystems, enterprise support, and trusted brand relationships.

However, DeepSeek is forcing a market reset. Premium pricing now requires premium justification. If open models continue narrowing the performance gap while maintaining aggressive pricing, major providers may need to compete more heavily on reliability, security, workflow integration, ecosystem depth, enterprise compliance, and customer trust. Raw intelligence alone may no longer be enough to justify premium economics.

What This Means for the AI Industry

DeepSeek-V4 suggests the AI race is entering a new phase. The first phase focused on who could build the smartest model. The second phase may focus on who can deliver intelligence cheaply, openly, and at global scale.

That shift could accelerate adoption across startups, mid-sized enterprises, emerging markets, and governments that were previously priced out of frontier AI access. If that happens, the market could become far larger overall, but far less profitable for incumbents that depend on premium pricing.

Final Thought: Scarcity Is Harder to Defend

DeepSeek-V4 is more than a product launch. It is a reminder that in technology, dominance becomes fragile when competitors can replicate capability and undercut price. The future of AI may still reward technical brilliance, but it may reward accessibility even more. If intelligence becomes abundant rather than scarce, the winners may not be those who built the best model first.

They may be those who made it available to everyone.

Source: Based on DeepSeek-V4 release materials, technical documentation, pricing disclosures, benchmark comparisons, and industry reporting (2026).