A new bipartisan bill in the United States is pushing artificial intelligence directly into the classroom, and it’s backed by some of the most powerful companies in the world. The proposed LIFT AI Act, introduced by Sen. Adam Schiff and supported by OpenAI, Google, and Microsoft, aims to embed “AI literacy” into K-12 education through federally funded programs, teacher training, and curriculum redesign.

At its core, the bill defines AI literacy as the ability to use AI tools effectively, interpret outputs critically, solve problems in an AI-enabled world, and understand associated risks. It proposes significant funding—reportedly up to $1 billion over five years—to develop teaching materials, evaluation tools, and hands-on learning experiences. The idea is simple: if AI is becoming foundational to the economy, students need to understand it early.

On the surface, the logic is hard to argue with. The U.S. labor market is already shifting, with strong growth projected in technology-related roles. Advocates believe that introducing AI concepts early could help close a widening skills gap and prepare students for a future where interacting with intelligent systems is as normal as using a smartphone. Teaching students not just how to use AI but also how to question it could help mitigate risks like bias, misinformation, and over-reliance.

But there’s a deeper tension beneath the proposal. Education has historically lagged behind technological change, yet this bill risks swinging too far in the opposite direction—embedding a rapidly evolving technology into a system that moves slowly and unevenly. Critics argue that students and teachers are already overwhelmed by shifting digital tools, and that forcing AI into curricula may create more confusion than clarity, especially if educators themselves are not fully equipped to teach it.

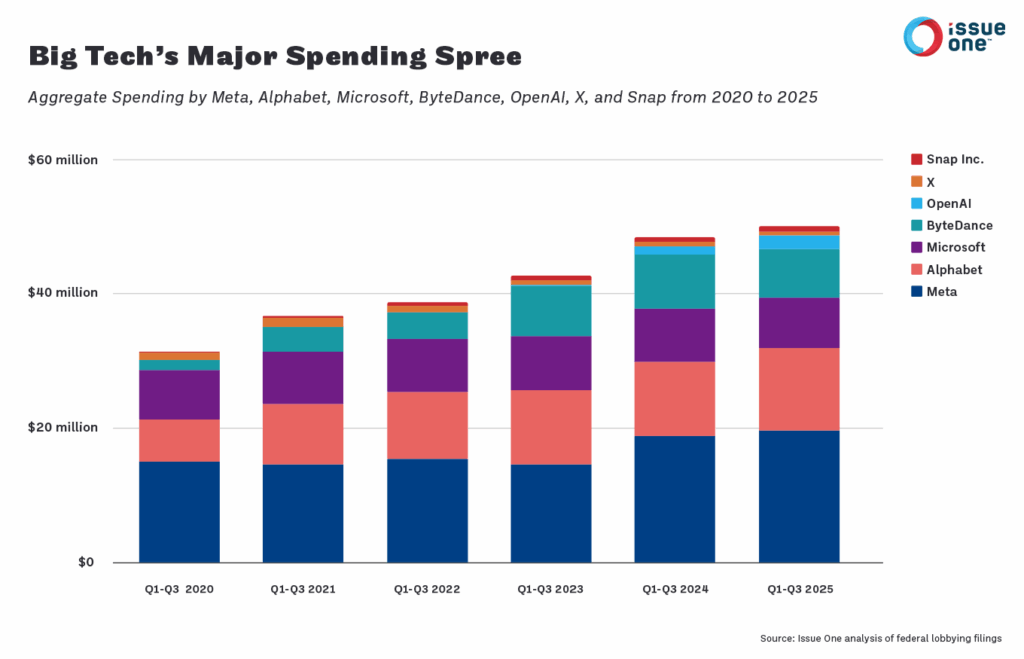

There is also the question of influence. When the same companies building AI systems endorse legislation that shapes how those systems are taught, it raises concerns about long-term control over educational narratives. Even if no one intends it, curricula could drift toward specific platforms, tools, or ecosystems instead of teaching foundational, platform-agnostic understanding.

The broader issue is not whether students should learn about AI; they should. The question is how. True AI literacy may be less about teaching students to use tools than about teaching them to think critically in a world where intelligence is increasingly externalized. That means understanding limitations, questioning outputs, and maintaining human judgment when working with systems that appear authoritative but are often probabilistic.

Ultimately, the LIFT AI Act reflects a larger shift: AI is no longer just a technology sector concern—it is becoming a societal one. As governments, companies, and educators race to define what AI literacy means, the risk is not that we teach it too early, but that we teach it too narrowly.

Source: U.S. LIFT AI Act proposal (2026), supported by OpenAI, Google, Microsoft, and industry groups; reporting inspired by Hacker News discussion and public policy summaries.